Google accelerates processors to power the intelligent agent economy

Google Cloud Next 2026 kicks off today in Las Vegas, the annual event where Google's Cloud and AI division showcases the innovations planned for this year.

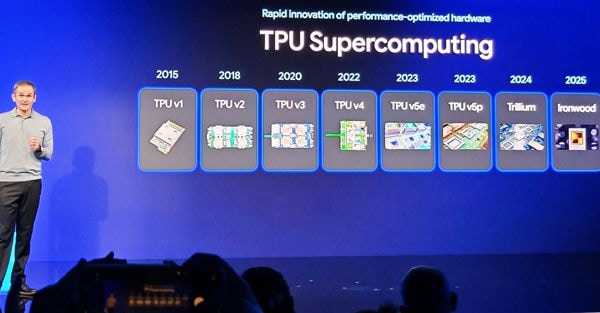

LAS VEGAS - Google Cloud Next 2026 starts today. At the annual Las Vegas event, Google's Cloud and AI division presents this year's innovations to press, partners, suppliers and customers. Keynotes on business strategy, product presentations and other initiatives are expected to push the market another step forward on AI agents, which are increasingly in vogue, and on AI-flavoured data processing.

Yesterday, however, Google held a presentation at the Formula 1 facility in Sin City dedicated to its two new custom processors, which belong to the eighth generation of TPUs. Google Cloud has been designing its own data centre processors since 2013, and the pace of releases has recently picked up. The company's CTO, Amin Vahdat, even suggested that we could see three new ones as early as next year if the AI sector keeps evolving at this furious pace. For now, there are TPU 8T and TPU 8i, born of the realisation that a single design can no longer efficiently meet the different needs of the AI lifecycle.

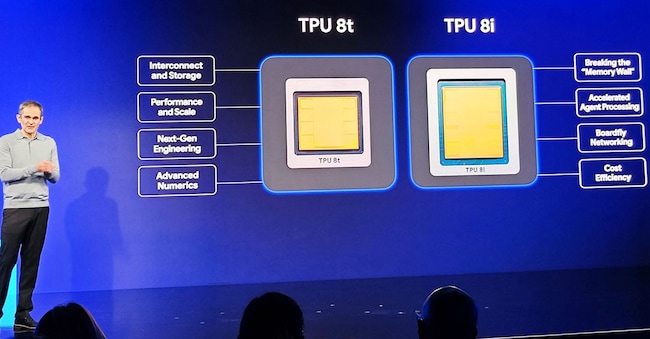

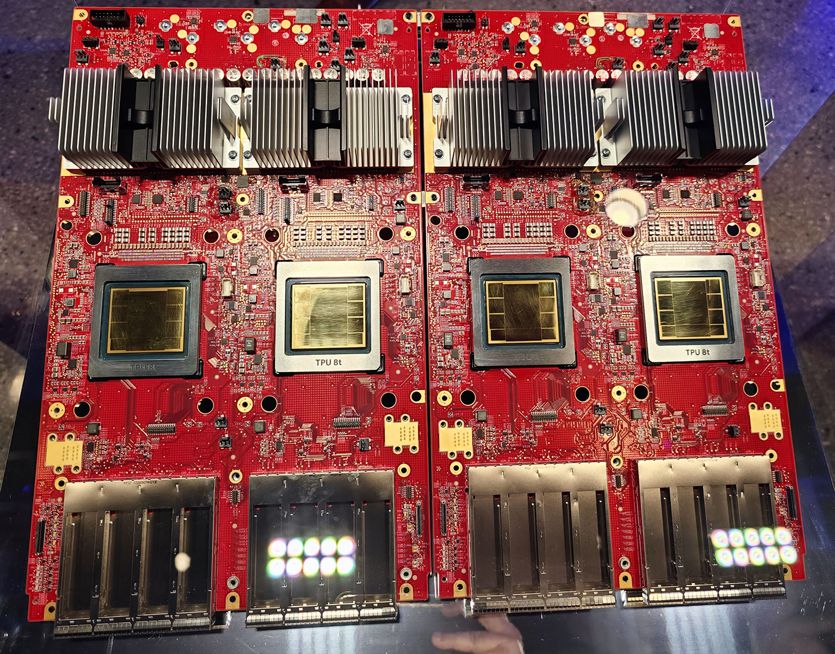

In particular, TPU 8T is designed as the 'power engine' dedicated to training large-scale models. TPU 8i, on the other hand, is designed for inference and reasoning and is optimised for the lowest possible latency and real-time responses, which are crucial in the era of AI agents.

According to Vahdat, this separation was necessary because, although training is the dominant workload today, the real value for services like Search, YouTube or Gemini Enterprise is created during the 'serving' (inference) phase.

Key features and generational comparison

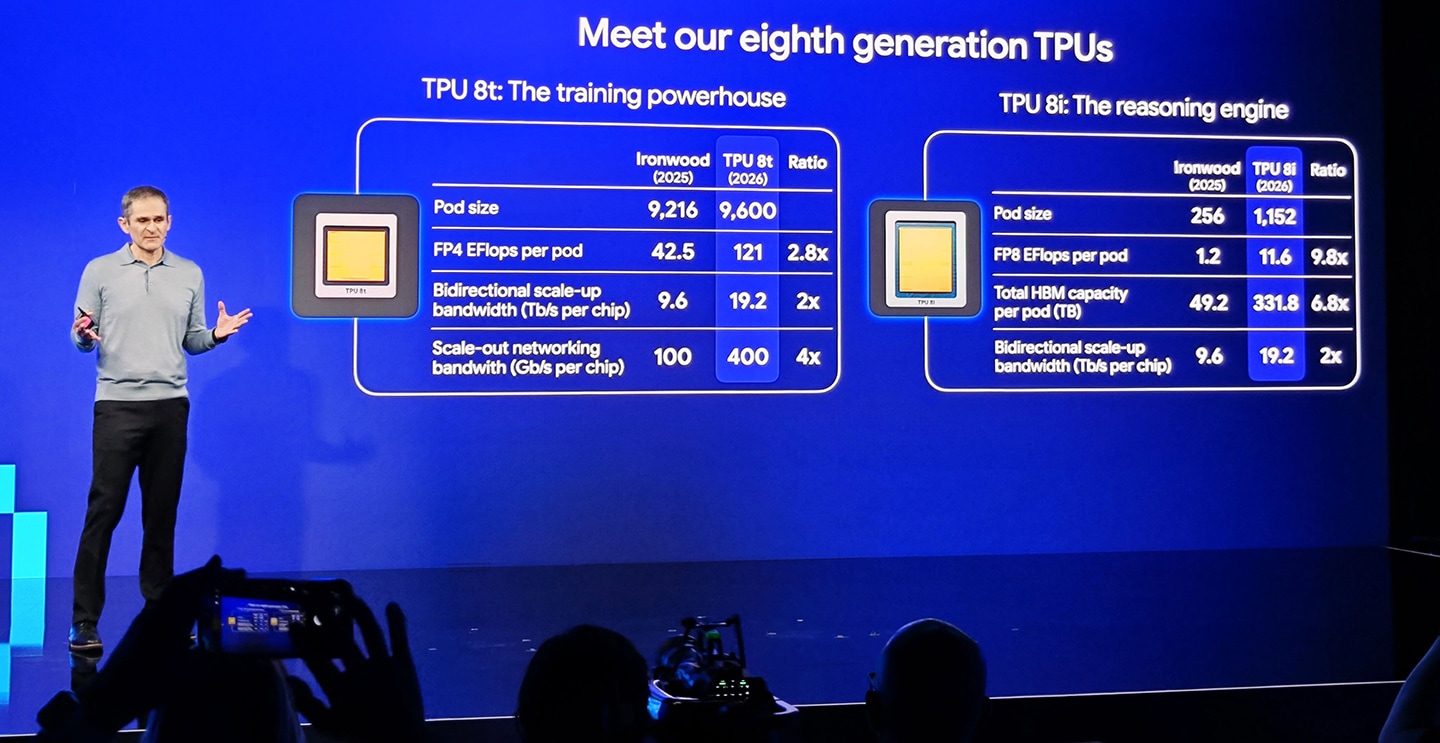

The improvements over the previous generation (Ironwood) are described as 'staggering', with performance multiplied almost 10 times in some workloads. A TPU 8T (Training) pod consists of 9,600 interconnected chips and offers almost three times the floating-point computing power of the previous generation. TPU 8i (Inference), on the other hand, stands out with an architecture that quadruples the pod size (now 1,152 interconnected chips) and offers 10 times the floating-point performance, measured in exaflops, of the previous chip (which, it should be remembered, was not optimised for this task).

The future of infrastructure: the great return of CPUs

Despite the importance of these announcements for Google, which will enable it to make its services even more effective while containing costs, Vahdat shared a counter-intuitive prediction. Google's decision to produce its own processors optimised for AI workloads was born out of the need for brute power in applications where the evolution of CPUs could not keep up with the needs of companies processing huge amounts of data (such as Google and its Web index). Now, Vahdat predicts that CPUs will return to play a key role in the coming years. Although the era of extreme specialisation continues, the complexity of AI agents requires a great deal of general-purpose computing for tasks such as orchestration, creating secure sandboxes and executing code, all areas in which CPUs perform very well.