Google Cloud Next 25: from inference chips to updated AI models

Google Cloud kicks off the annual event at which it announces the most important innovations it will bring to market in the coming months

5 min read

LAS VEGAS - Google Cloud Next, the annual event at which Google Cloud announces the most important innovations it will bring to market in the coming months, is about to begin. Twenty minutes before CEO Thomas Kurian's speech, the musical entertainment gets underway: both the music and the video were created with gen-AI tools, including the new Veo 2 video generation system. The editing is still human, as is the direction given to tools and frames, but the individual pieces that are stitched together are mostly the product of inference. It is a fitting expression of AI that creates superpowered professionals, capable of doing days of work in a matter of hours. After all, the thread running through the entire event is precisely that of AI that 'really serves': a myriad of tools capable of changing, for the better, the way people work.

We start with some numbers. Kurian says that Google has 2 million miles of cable running around the world, that more than 4 million developers use Gemini to code, that use of Vertex AI, the gen-AI suite for professionals, is accelerating steadily and has grown twenty-fold in a year, and that there are more than 500 success stories of Google Cloud technology applied in businesses. The introduction sets up the announcements made by Sundar Pichai, CEO of Google and Alphabet (by a curious twist of fate, his surname ends in 'AI').

The first is an announcement 'you don't expect'. To Pichai, all those miles of cable seem almost wasted running 'only' Google's own infrastructure, and the network evidently has enough spare capacity to be opened to external customers. Hence the launch of Cloud Wide Area Network (Cloud WAN), which makes Google's global private network available to companies. According to Google, the infrastructure offers up to 40 per cent better performance than the public Internet, providing fast and reliable connectivity for business operations on a global scale. Access to such a large network lets businesses improve operational efficiency and offer more responsive services to their customers. In the end, that is what it is all about: AI opens up markets and improves efficiency, but it needs infrastructure, and Google says it can provide it at a saving of 40 per cent compared with traditional infrastructure.

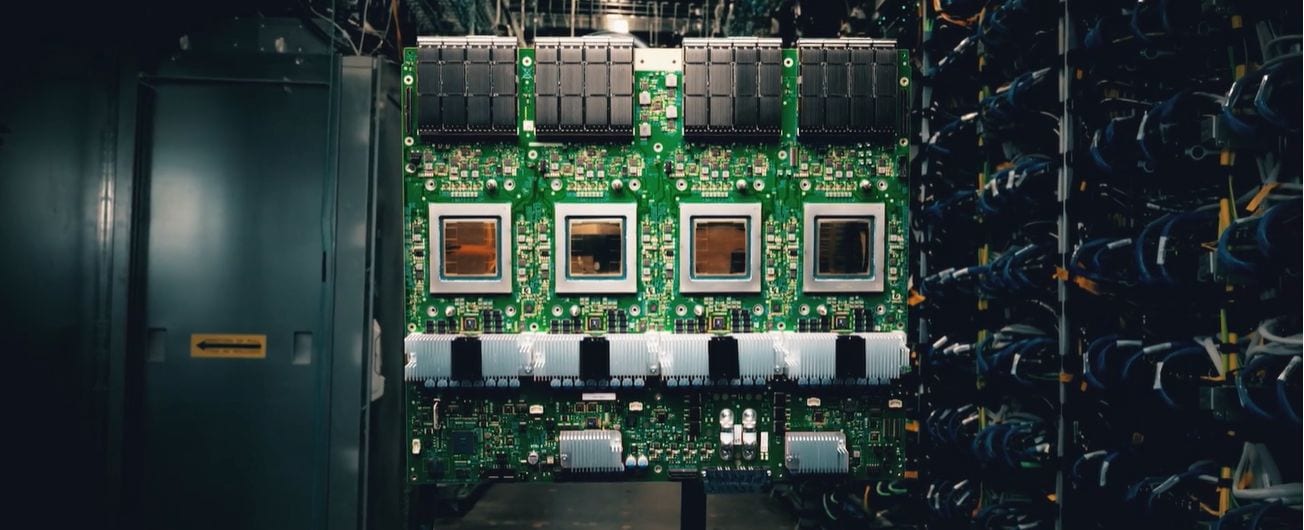

Speaking of infrastructure, we come to the big news: Ironwood, Google's seventh-generation Tensor Processing Unit (TPU), a processor designed to accelerate AI applications. The chip is optimised for inference, the real-time processing required by chatbots and virtual assistants, and offers twice the performance per unit of energy of its predecessor, Trillium. Ironwood is a step forward in both energy efficiency and computing power. We don't yet know when it will come to market. With the infrastructure groundwork laid by Pichai, we move on to the announcements more closely tied to AI itself.

Gemini 2.5: advanced models for different needs

As part of its family of AI models, Google has announced Gemini 2.5 Flash, which completes the offering following the introduction of Gemini 2.5 Pro in March. Gemini 2.5 Pro is designed to tackle complex tasks requiring in-depth analysis, such as interpreting legal or medical documents, while Gemini 2.5 Flash is optimised for applications requiring low latency and high efficiency, such as responsive virtual assistants and real-time summarisation tools. Having both gives companies the flexibility to match the model to their specific operational needs, since Flash costs significantly less per use than Pro.