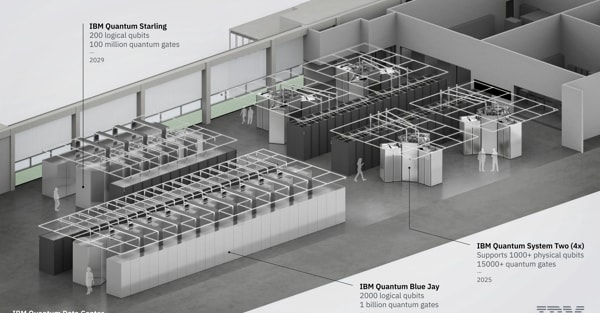

IBM: first fault-tolerant quantum computing system operational in 2029

IBM Starling will be built in New York and will be able to run 20,000 times more circuits than current quantum computers.

5 min read


This time the quantum computing race has a date: 2029. IBM says it has overcome one of the hardest obstacles in quantum computing: error correction. The achievement opens up, for the first time, a concrete and feasible path to building the first large-scale, fault-tolerant quantum computer, that is, one capable of autonomously correcting the errors that limit performance today. The system, christened Quantum Starling, is due to be operational in 2029 at a new dedicated data centre in Poughkeepsie, New York State.

The study, which made the cover of Nature, sets out a different approach from that taken by rivals Google, AWS and Microsoft. Capable of running 100 million quantum operations on 200 logical qubits, Starling will be the first system truly designed to achieve fault tolerance. And as Jerry M. Chow, a researcher at IBM's Thomas J. Watson Research Center in New York, explained to Il Sole 24 Ore, it will be able to execute 20,000 times more circuits than current quantum computers. According to IBM, representing the quantum state of the system would require the combined memory of more than 10^48 of the most powerful existing supercomputers.
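To give a sense of why the memory claim is so large, here is a rough back-of-the-envelope sketch in Python. It only counts the bytes needed to store a full state vector for 200 qubits, assuming one 16-byte complex number per amplitude; that storage format and the helper name `state_vector_bytes` are assumptions made for illustration, and the sketch does not try to reproduce IBM's 10^48 figure, which depends on supercomputer specifications not given in the article.

```python
# Assumption: each amplitude is stored as one double-precision complex number.
BYTES_PER_AMPLITUDE = 16


def state_vector_bytes(num_qubits):
    """Bytes needed to hold all 2**num_qubits amplitudes of a state vector."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


# 200 is the number of logical qubits quoted for Starling.
starling_bytes = state_vector_bytes(200)
print(f"{starling_bytes:.3e} bytes")
```

The result is on the order of 10^61 bytes, and every additional qubit doubles it, which is why brute-force classical simulation stops being an option long before 200 qubits.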

What's changing in the quantum computing race?

IBM's discovery solves the scalability problem in quantum computing. The approach is designed to cut the overhead required for error correction by 90 per cent and represents the first credible path to a quantum system this powerful. The quantum computing race is not over, and several intermediate technological steps remain, but it no longer looks like a marathon.

What does IBM's discovery consist of?

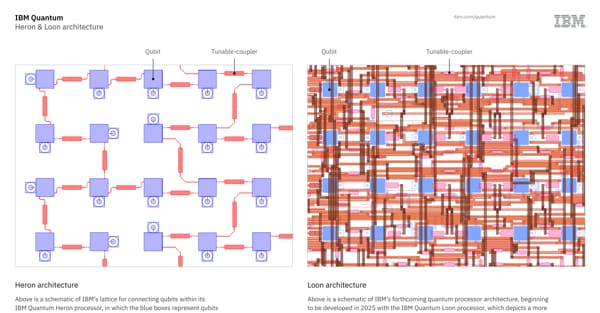

A logical qubit is a single error-corrected unit of information, built from a group of physical qubits. The ability to correct errors is central to scaling quantum computing. Without it, operations become unstable beyond a certain number of cycles.

Essentially, quantum error correction requires encoding quantum information into more qubits than would otherwise be needed. Until now, however, achieving quantum error correction in a scalable, fault-tolerant manner has been out of reach without resorting to a million or more physical qubits.

IBM is aiming for a 90 per cent reduction in the number of physical qubits required by using quantum low-density parity check (qLDPC) codes, a new form of error-correcting code also recently presented in the journal Nature. The researchers have significantly reduced the overhead and show that error correction is within reach. In essence, they say they have solved the key problem of large-scale quantum error correction. In an LDPC code, each check operation involves only a small number of qubits and each qubit participates in only a small number of checks.

As the article states, IBM has numerically demonstrated that its bivariate bicycle (BB) codes can preserve 12 logical qubits for one million syndrome cycles at a realistic physical error rate of 0.1 per cent, an unprecedented result for such low overhead. Errors in the qubits are detected continuously through a procedure known as syndrome measurement, which checks for errors so that they can be corrected. A syndrome cycle is a single round of these checks. IBM says it can repeat this check a million times without losing the information in the logical qubits.
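To make sparse parity checks and syndrome cycles more concrete, here is a minimal classical sketch in Python. It is not IBM's BB code, and it only detects classical bit flips rather than quantum errors; the ring layout and the names `ring_parity_checks` and `syndrome` are assumptions chosen purely for illustration.

```python
def ring_parity_checks(n):
    """Return n sparse checks; check i compares bit i with bit (i + 1) % n."""
    return [(i, (i + 1) % n) for i in range(n)]


def syndrome(checks, bits):
    """Run one syndrome cycle: 0 means a check is satisfied, 1 means violated."""
    return [sum(bits[q] for q in check) % 2 for check in checks]


checks = ring_parity_checks(6)

# Low density: every check touches 2 bits, and every bit sits in 2 checks.
checks_per_bit = [sum(q in check for check in checks) for q in range(6)]

codeword = [0, 0, 0, 0, 0, 0]
corrupted = codeword.copy()
corrupted[2] ^= 1  # inject a single bit flip at position 2

clean_syndrome = syndrome(checks, codeword)
error_syndrome = syndrome(checks, corrupted)

# Indices of the checks that fired; they point to where the error sits.
flagged = [i for i, s in enumerate(error_syndrome) if s]
print(flagged)
```

In this toy case only the two checks that touch the flipped bit fire, so the error is located without reading the protected data directly. A real decoder would use that pattern to pick a correction, and the cycle would then be repeated.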
