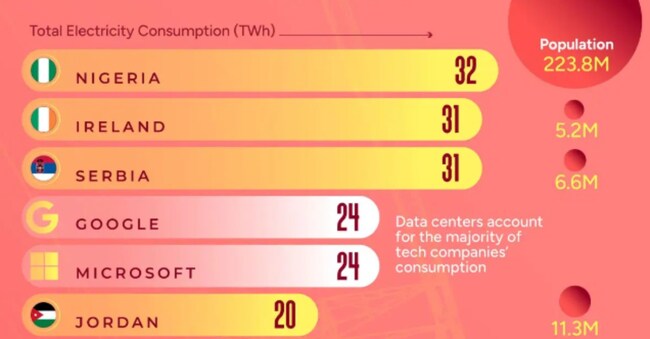

Microsoft and Google consume more energy than Nigeria

Artificial intelligence development is raising energy requirements and pushing carbon neutrality targets further out of reach for US big tech

3' min read

On the one hand, there are plans, increasingly in the balance, to reach carbon neutrality within a few years. On the other, there is the artificial intelligence boom, which has pushed the technology sector into a new, energy-hungry phase. This is where recent studies on the consumption of the tech giants come in, precisely in light of the AI explosion. They found, for example, that in 2023 Google and Microsoft together consumed more energy than Nigeria (with 224 million inhabitants) or Ireland, and that each of them individually consumed more than nations like Croatia, Jordan or Puerto Rico.

The hunger of AI

Let us, however, take a step back. Artificial intelligence is certainly 'guilty' of this ravenous demand for energy. The large data centres that run AI, after all, require massive amounts of energy for their calculations. So much so that many carbon-neutrality projects have been put on the back burner while waiting for better times: according to a study published by Standard & Poor's, the divestment of coal-fired power generation in 2023 was 40% lower than expected.

According to a recent estimate from the Vrije Universiteit in Amsterdam, the entire artificial intelligence industry could consume between 85 and 134 terawatt hours per year by 2027. And although the various GenAI models have already been slimmed down noticeably in terms of consumption, the estimates do not ease doubts about the long-term sustainability of this technology.

A recent study published on Medium found, for example, that training OpenAI's GPT-4 used up to 62,000 megawatt hours, equivalent to the energy needs of 1,000 US households over five to six years.
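As a rough sanity check on that comparison, the figures quoted above can be turned into an implied consumption per household. The sketch below only rearranges the article's own numbers (62,000 MWh, 1,000 households, five to six years); the function name is illustrative and nothing here comes from the study itself.

```python
# Figures quoted in the article; everything else is simple arithmetic.
TRAINING_ENERGY_MWH = 62_000   # upper estimate for GPT-4 training
HOUSEHOLDS = 1_000             # number of US households in the comparison


def implied_household_mwh_per_year(total_mwh, households, years):
    """Energy each household would have to use per year for the comparison to hold."""
    return total_mwh / households / years


for years in (5, 6):
    per_year = implied_household_mwh_per_year(TRAINING_ENERGY_MWH, HOUSEHOLDS, years)
    print(f"{years} years: {per_year:.1f} MWh per household per year")
```

The comparison therefore implies a household using somewhere between roughly 10 and 12.4 MWh a year.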

New chips needed

The point is that current chips are definitely energy-intensive. Nvidia's H100 chip, the most sought-after processor in the AI world (it drives ChatGPT and other GenAI systems), consumes around 700 watts. And a small data centre has at least 400 of these chips in it, while a large one has as many as 8,000. That adds up to a huge amount of energy, which is turning the need for less energy-intensive chips into an emergency. The risk, voiced by many, is that AI development may soon come to a standstill because it is not sustainable.
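To give a sense of scale, the chip figures above can be multiplied out. This is a back-of-the-envelope sketch, not a measurement: it assumes every chip runs at the quoted 700 watts around the clock, and it counts only the chips themselves, ignoring cooling, networking and other data centre overheads. The function names are illustrative.

```python
# Figure quoted in the article.
CHIP_POWER_W = 700
# Assumption for illustration: chips run at full power all year.
HOURS_PER_YEAR = 24 * 365


def chip_load_kw(num_chips, watts_per_chip=CHIP_POWER_W):
    """Combined power draw of the chips alone, in kilowatts."""
    return num_chips * watts_per_chip / 1000


def annual_energy_mwh(num_chips, watts_per_chip=CHIP_POWER_W):
    """Energy used in a year at constant full load, in megawatt hours."""
    return chip_load_kw(num_chips, watts_per_chip) * HOURS_PER_YEAR / 1000


for label, chips in (("small data centre", 400), ("large data centre", 8_000)):
    print(f"{label}: {chip_load_kw(chips):,.0f} kW, "
          f"about {annual_energy_mwh(chips):,.0f} MWh per year")
```

Under these assumptions, 400 chips draw 280 kilowatts and 8,000 chips draw 5.6 megawatts, before any of the surrounding infrastructure is counted.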