Real-time translation lands on smartphones, glasses and meetings. Voice and identity saved (almost)

The novelty is Google's ability to translate while preserving the user's timbre and vocal identity (voice mimicry). Apple and Meta, on the other hand, focus on 'live' translation via earphones (AirPods) and smart glasses (Ray-Ban).

A business call or a video conference in a foreign language, or a trip abroad: these experiences are now easier for those who do not know the other person's language well, thanks to real-time translation by artificial intelligence. Yes: it can be said that AI has more or less broken down the language barrier, even if there is still some way to go before perfection. And the good news is that Italian (translation to and from our language) is widely supported.

It is significant that all the big tech companies are now in the field: Meta, Apple, Google, Samsung, Microsoft. Take Google, which with the new Pixel 10 has introduced 'Voice Translate': a function that translates phone calls between English and ten other languages while retaining the user's original voice (the timbre and, in theory, also the expressions or emotions, although it is still unreliable on this point). The processing is carried out 'on device', thanks to the Tensor G5 chip and the Gemini Nano AI models, and therefore with lower latency and better privacy protection. In practice, the other party hears the caller's voice, but in their own language.

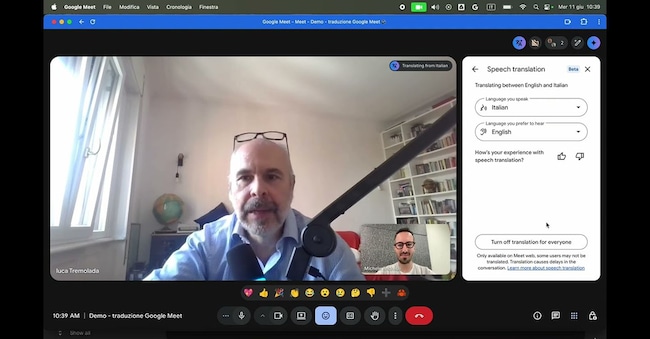

It is an important technological step: the 'mimicked' voice preserves (more or less) the speaker's communicative identity. The same technology is being extended to Google Meet, for business video conferences.

Apple is also moving in the same direction, but with a different approach, centred on 'live' experiences. With the latest version of iOS, the Live Translation function arrives on AirPods, allowing real-time translation of in-person conversations. The user can activate the mode with a gesture or with Siri, and hear the translation directly in the earbuds while talking to someone who speaks another language. All this, again, with local computation to protect privacy. It is an example of how Apple aims to make technology invisible, turning a complex function into a natural gesture.

Meta has yet another vision of the future: it has brought voice translation out of the smartphone and into its second-generation Ray-Ban glasses, where the integrated AI assistant can translate what the user hears or says, playing the translation back through the built-in speakers. Meta envisions a future in which augmented reality frees us from screens, serving not only to display visual information but also to mediate the real world linguistically. Google is already following the same path with its Android XR-based glasses projects.