When the Internet was us: now only algorithms surf. Happy Birthday 'WorldWideWeb'

Thirty-five years after the HyperText Project, we have entered the era of the web agent, a web that may no longer have humans in it

The birth of the web has been debated for years, not least because of newspapers ravenous for anniversaries to celebrate. 13 November 1990, for example, is the day when Tim Berners-Lee, working at CERN in Geneva together with Robert Cailliau, officially presented a proposal for the system that would become the World Wide Web: an Internet-based hypertext environment, accessible via a browser, capable of connecting documents and resources through hyperlinks. The document was entitled 'WorldWideWeb: Proposal for a HyperText Project'.

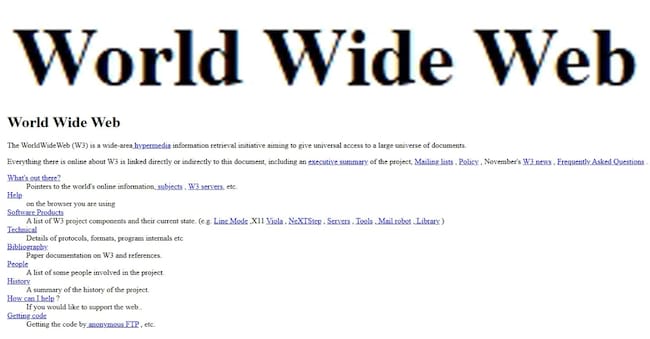

The first web page in history was born the following year, on 6 August 1991. It was hosted at CERN itself, on a NeXT computer, and contained a wealth of useful information on the project, including how to create HTML pages. You can find it here in all its glory.

For a long time in the 1990s, the Internet was the stuff of cultural avant-gardists. Stuff for computer enthusiasts who had lots and lots of time on their hands. The modem, which was never far from the PC, was a small grey or beige box with a row of little green or red lights flashing in sequence. Once a phone number was dialled, it would start to croak and whistle. Web pages loaded very slowly, one piece at a time. Typing 'www.something' and pressing the Enter key was often a leap in the dark: minutes of waiting, perhaps to connect to some distant university, only to find that there was still nothing really interesting on the site.

The Internet was a 'job' that you did sitting on a chair: there were no smartphones yet. It was desk work, and you couldn't type lying down. For younger people it was an activity loosely tied to studying or video games (if you had a PC in your bedroom). At work in the nineties there was sometimes a shared Internet station, maybe next to the fax machine.

In the newspapers or on TV, those who 'surfed the Internet' were called Internet users or, even worse, 'net people'. They were considered something new and different from 'normal' citizens. That is why technology journalists were asked to come up with offbeat angles for the time: the barber who opens a website even though he has no reason to, the young nerd who broadcasts images from his home webcam 24 hours a day, or the couple who met online and got married in the real world. Outside the headlines, those who lived on the Internet in the 1990s communicated a lot and freely. There were no big digital platforms yet, no Google to organise online knowledge, no search engines to normalise traffic and no social networks to exploit our likes. We were all a bit freer, because it was indeed a no-man's land, but one increasingly crowded with experimenters and the curious.