DeepL Voice-to-Voice and Translator: voice is the new heart of linguistic AI

A more than significant acceleration: this is how various experts describe the path machine translation systems have taken in recent years, driven (obviously) by the evolution of artificial intelligence models and (less predictably) by ever closer integration into mainstream digital work environments.

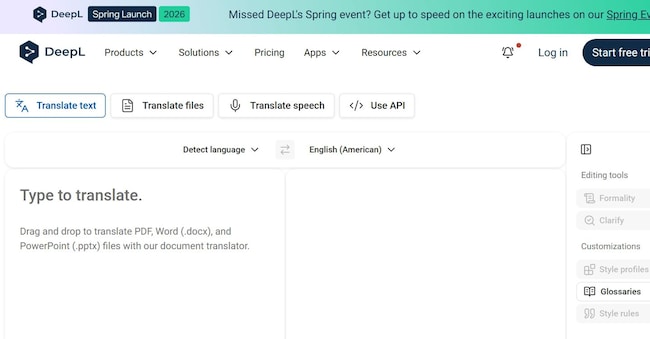

From consumer services to enterprise platforms, tools from Google, Microsoft and other tech companies have progressively shifted the centre of gravity from simple text translation to more advanced forms of interaction, including voice, context and real-time collaboration.

According to various market analyses, AI applied to natural language is among the fastest-growing segments. The growth is driven by companies' need to operate on a global scale without linguistic friction, and by the increasingly widespread view that translation is moving from an ancillary service to an infrastructural element of digital processes, with direct impacts on productivity and decision-making speed.

Low-latency real-time translation

It is in this context that DeepL's latest step forward fits in. The German start-up, which rose to prominence a few years ago thanks to its translation tool of the same name, has announced Voice-to-Voice, a suite that tackles one of the most technologically complex areas of linguistic AI: real-time spoken communication. More precisely, it addresses the 'end-to-end' process that combines speech recognition (speech-to-text), neural translation and speech synthesis (text-to-speech) in a continuous, low-latency flow.

The central issue on which several past solutions have run aground is latency: to make a multilingual conversation feel natural, a translation system must capture speech, transcribe it, translate it and return it in spoken form within a few seconds, while maintaining semantic coherence and fluency.
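To make the latency problem concrete, the three-stage flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not DeepL's implementation: the functions `recognize_speech`, `translate_text` and `synthesize_speech` are hypothetical stubs standing in for real engines, the toy glossary is invented for the example, and the two-second budget is an arbitrary reading of "a few seconds".

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


# Hypothetical stand-ins for real engines; none of these are DeepL APIs.
def recognize_speech(audio: bytes) -> str:
    """Stub speech-to-text: pretends the audio bytes are UTF-8 text."""
    return audio.decode("utf-8")


def translate_text(text: str) -> str:
    """Stub translation: looks words up in a tiny, invented glossary."""
    glossary = {"buongiorno": "good morning", "grazie": "thank you"}
    return " ".join(glossary.get(word, word) for word in text.lower().split())


def synthesize_speech(text: str) -> bytes:
    """Stub text-to-speech: returns the text as bytes instead of audio."""
    return text.encode("utf-8")


@dataclass
class PipelineResult:
    audio_out: bytes
    stage_seconds: Dict[str, float] = field(default_factory=dict)

    @property
    def total_seconds(self) -> float:
        # End-to-end latency is the sum of the per-stage latencies.
        return sum(self.stage_seconds.values())


def run_pipeline(audio_in: bytes) -> PipelineResult:
    """Run the three stages in sequence, timing each one separately."""
    stages: List[Tuple[str, Callable[[Any], Any]]] = [
        ("speech_to_text", recognize_speech),
        ("translation", translate_text),
        ("text_to_speech", synthesize_speech),
    ]
    data: Any = audio_in
    timings: Dict[str, float] = {}
    for name, stage in stages:
        start = time.perf_counter()
        data = stage(data)
        timings[name] = time.perf_counter() - start
    return PipelineResult(audio_out=data, stage_seconds=timings)


def within_budget(result: PipelineResult, budget_seconds: float) -> bool:
    """Check the total latency against an assumed conversational budget."""
    return result.total_seconds <= budget_seconds


if __name__ == "__main__":
    result = run_pipeline(b"Buongiorno grazie")
    print(result.audio_out)
    print(result.stage_seconds)
    print("within 2 s budget:", within_budget(result, 2.0))
```

Because the stages run one after another, their delays add up, which is why every step in the chain has to be fast for the conversation to feel natural.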